S.L. Benfica—Portugal’s top football team and one of the best teams in the world—makes as much money from carefully nurturing, training, and selling players as actually playing football.

Football teams have always sold and traded players, of course, but Sport Lisboa e Benfica has turned it into an art form: buying young talent; using advanced technology, data science, and training to improve their health and performance; and then selling them for tens of millions of pounds—sometimes as much as 10 or 20 times the original fee.

Let me give you a few examples. Benfica signed 17-year-old Jan Oblak in 2010 for €1.7 million; in 2014, as he blossomed into one of the best goalies in the world, Atlético Madrid picked him up for a cool €16 million. In 2007 David Luiz joined Benfica for €1.5 million; just four years later, Luiz was traded to Chelsea for €25 million and player Nemanja Matic. Then, three years after that, Matic returned to Chelsea for another €25 million. All told, S.L. Benfica raised more than £270 million (€320m) from player transfers over the last six years.

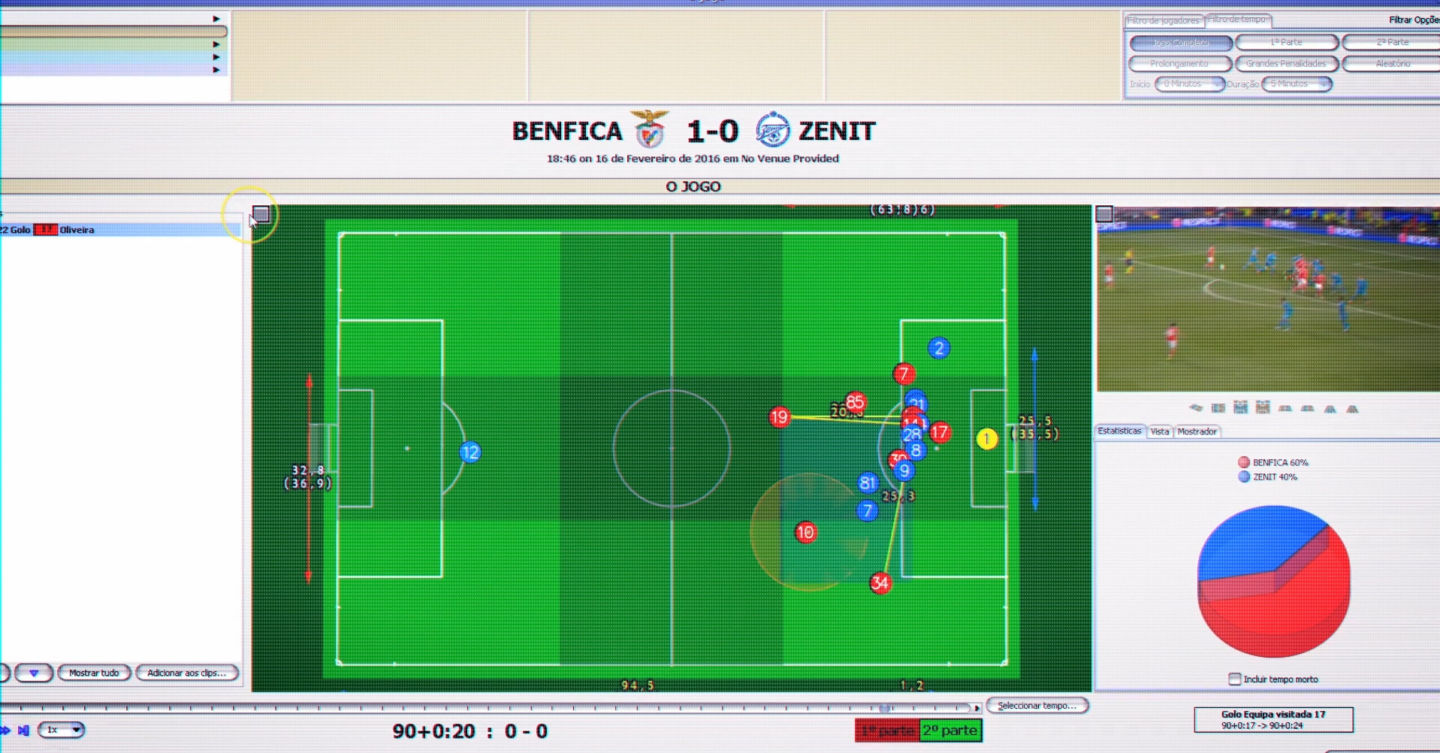

At Benfica’s Caixa Futebol Campus there are seven grass pitches, two artificial fields, an indoor test lab, and accommodation for 65 youth team members. With three top-level football teams (SL Benfica, SL Benfica B, and SL Benfica Juniors) and other youth levels below that, there are over 100 players actively training at the campus—and almost every aspect of their lives is tracked, analyzed, and improved by technology. How much they eat and sleep, how fast they run, tire, and recover, their mental health—everything is ingested into a giant data lake.

With machine learning and predictive analytics running on Microsoft Azure, combined with Benfica’s expert data scientists and the learned experience of the trainers, each player receives a personalized training regime where weaknesses are ironed out, strengths enhanced, and the chance of injury significantly reduced.

Sensors, lots of sensors

Before any kind of analysis can occur, Benfica has to gather lots and lots of data—mostly from sensors, but some data points (psychology, diet) have to be surveyed manually. Because small, low-power sensors are a relatively new area with lots of competition, there’s very little standardization to speak of: every sensor (or sensor system) uses its own wireless protocol or file format. “Hundreds of thousands” of data points are collected from a single match or training session.

Processing all of that data wouldn’t be so bad if there were just three or four different sensors, but we counted almost a dozen disparate systems—Datatrax for match day tracking, Prozone, Philips Actiware biosensors, StatSports GPS tracking, OptoGait gait analysis, Biodex physiotherapy machines, the list goes on—and each one outputs data in a different format, or has to be connected to its own proprietary base station.

Benfica uses a custom middleware layer that sanitises the output from each sensor into a single format (yes, XKCD 927 is in full force here). The sanitised data is then ingested into a giant SQL data lake hosted in the team’s own data centre. There might even be a few Excel spreadsheets along the way, Benfica’s chief information officer Joao Copeto tells Ars (“they exist in every club,” he says with a laugh), but the club is in the process of moving everything to the cloud with Dynamics 365 and Microsoft Azure.
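To make the middleware idea concrete, here is a minimal sketch of how per-sensor adapters might funnel different export formats into one common record. Benfica has not published its implementation, so everything here is an assumption for illustration: the `Reading` record, the two made-up export formats (a GPS CSV and a sleep-tracker JSON file), and the function names are all hypothetical.

```python
import csv
import io
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    """One normalised data point, whatever sensor produced it (hypothetical schema)."""
    player_id: str
    source: str
    metric: str
    value: float
    unit: str


def parse_gps_csv(text: str) -> list[Reading]:
    # Assumed GPS export: CSV with columns "player" and "speed_kmh".
    rows = csv.DictReader(io.StringIO(text))
    return [
        # Convert km/h to m/s so every speed in the lake shares one unit.
        Reading(row["player"], "gps", "speed", float(row["speed_kmh"]) / 3.6, "m/s")
        for row in rows
    ]


def parse_sleep_json(text: str) -> list[Reading]:
    # Assumed sleep-tracker export: JSON list of {"id": ..., "sleep_minutes": ...}.
    return [
        Reading(item["id"], "sleep", "sleep_duration", item["sleep_minutes"] / 60, "h")
        for item in json.loads(text)
    ]


# One adapter per sensor system; supporting a new sensor means adding one entry.
ADAPTERS = {"gps": parse_gps_csv, "sleep": parse_sleep_json}


def normalise(source: str, payload: str) -> list[Reading]:
    """Route a raw payload to the adapter for its sensor system."""
    return ADAPTERS[source](payload)
```

Calling `normalise("gps", "player,speed_kmh\np1,36\n")` would yield a single `Reading` for player `p1` with the speed expressed in metres per second. The point of the design is that everything downstream of the adapters only ever sees one record shape, however many sensor vendors sit upstream.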

Once everything is floating around in the data lake, maintaining the security and privacy of that data is very important. “Access to the data is segregated, to protect confidentiality,” says Copeto. “Detailed information is only available to a very restricted group of professionals.” Benfica’s data scientists, who are mostly interested in patterns in the data, only have access to anonymised player data: they can see the player’s position, but not much else.
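As a rough illustration of what segregated access could look like in code, the sketch below filters a player record down to the fields a given role is allowed to see. The roles, field names, and the `view_for` helper are hypothetical; the article only tells us that data scientists see anonymised data that retains the player’s position.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PlayerRecord:
    # Hypothetical fields, chosen only for illustration.
    name: str
    position: str
    sprint_speed_ms: float
    sleep_hours: float


# Assumed roles and the fields each one may see.
VISIBLE_FIELDS = {
    "medical_staff": {"name", "position", "sprint_speed_ms", "sleep_hours"},
    # Data scientists get the metrics and the position, but no identity.
    "data_scientist": {"position", "sprint_speed_ms", "sleep_hours"},
}


def view_for(role: str, record: PlayerRecord) -> dict:
    """Return only the fields this role is allowed to see."""
    allowed = VISIBLE_FIELDS.get(role, set())  # unknown roles see nothing
    return {key: value for key, value in asdict(record).items() if key in allowed}
```

With this arrangement, `view_for("data_scientist", record)` returns the position and metrics without the name, while an unrecognised role gets an empty dictionary. Defaulting to "see nothing" is the safer choice when the data concerns health and performance.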

Players have full access to their own data, which they can compare to team or position averages, to see how they’re doing in the grand scheme of things. Benfica is very careful to comply with existing EU data protection laws and is ready to embrace the even-more-stringent General Data Protection Regulation (GDPR) when it comes into force in 2018.
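The comparison against position averages is simple arithmetic. A minimal sketch, assuming each player is represented as a dictionary with a `position` key and one numeric key per metric (a hypothetical layout, not Benfica’s actual schema):

```python
from statistics import mean


def compare_to_position(player: dict, squad: list[dict], metric: str) -> float:
    """Return the player's value minus the mean for everyone in the same position.

    The player is assumed to be part of `squad`, so they count towards the average.
    """
    peers = [p[metric] for p in squad if p["position"] == player["position"]]
    return player[metric] - mean(peers)
```

A positive result means the player is above the average for their position on that metric, a negative one means below. Whether "above" is good depends on the metric, of course: more sleep is welcome, a longer recovery time is not.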
