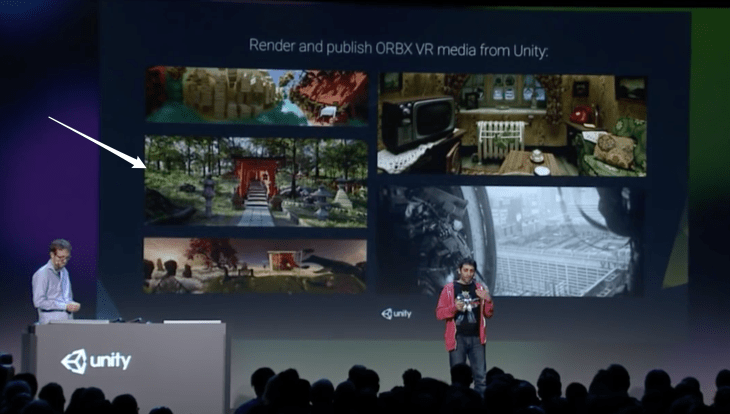

At VRLA this past month, I had the opportunity to see first-hand how the technology gap is closing for photorealistic rendering in virtual reality. On the ODG R-9 Smartglasses, Otoy was showing a CG scene rendered with Octane Renderer that was so realistic I couldn’t tell whether or not it was real. The ORBX VR media file that results when you build a scene in Octane can be played back at 18K on the GearVR. Unity and Otoy are actively working to integrate Otoy’s rendering pipeline into the Unity 2017 release of the engine. In short, with the light-field render option, you can move your head around inside the scene if the device’s positional tracking allows for it.

Octane is an unbiased renderer. In computer graphics, unbiased rendering is a method of rendering that does not introduce systematic errors or distortions in the estimation of illumination. In the early 2010s, Octane became a pipeline used mainly for visualization work, covering everything from a tree to a building for architects. About 13 years ago, Sony Pictures Imageworks enabled VFX knowledge that is now coming to VR content from Magnopus. (See “How VR, AR, & MR Are Driving True Pipeline Convergence,” presented by Foundry.)

For a change of pace: the voxel, so named as a shortened form of “volume element”, is kind of like an atom. It represents a value on a regular grid in three-dimensional space.

This is analogous to a texel, which represents 2D image data in a bitmap (which is sometimes referred to as a pixmap). As with pixels in a bitmap, voxels themselves do not typically have their position (their coordinates) explicitly encoded along with their values. Instead, the position of a voxel is inferred based upon its position relative to other voxels (i.e., its position in the data structure that makes up a single volumetric image). In contrast to pixels and voxels, points and polygons are often explicitly represented by the coordinates of their vertices. A direct consequence of this difference is that polygons are able to efficiently represent simple 3D structures with lots of empty or homogeneously filled space, while voxels are good at representing regularly sampled spaces that are non-homogeneously filled. [1]
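To make the implicit-versus-explicit distinction concrete, here is a minimal Python sketch. The `VoxelGrid` class, its `origin` and `spacing` fields, and the x-fastest storage order are all assumptions made for illustration; they are not taken from Octane or any particular voxel library.

```python
from dataclasses import dataclass


@dataclass
class VoxelGrid:
    """Hypothetical dense voxel grid: stores values only, never coordinates."""
    nx: int
    ny: int
    nz: int
    origin: tuple = (0.0, 0.0, 0.0)  # assumed world position of the grid's corner
    spacing: float = 1.0             # assumed edge length of one voxel

    def __post_init__(self):
        # One value per voxel in a flat list; no position is stored anywhere.
        self.values = [0.0] * (self.nx * self.ny * self.nz)

    def index(self, i, j, k):
        # Flatten (i, j, k) with x varying fastest.
        return i + self.nx * (j + self.ny * k)

    def set(self, i, j, k, value):
        self.values[self.index(i, j, k)] = value

    def get(self, i, j, k):
        return self.values[self.index(i, j, k)]

    def center(self, i, j, k):
        # Position is inferred from the voxel's place in the grid.
        ox, oy, oz = self.origin
        s = self.spacing
        return (ox + (i + 0.5) * s, oy + (j + 0.5) * s, oz + (k + 0.5) * s)


# A polygon, by contrast, carries its vertex coordinates explicitly.
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]

grid = VoxelGrid(4, 4, 4, origin=(10.0, 0.0, 0.0), spacing=0.5)
grid.set(1, 2, 3, 0.8)
print(grid.center(1, 2, 3))  # world-space center recovered from the indices
```

Note the trade-off the paragraph describes: this grid always holds 64 values even though only one is non-zero, while the triangle needs just three vertices no matter how much empty space surrounds it.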

Within the last year, I’ve seen Google’s Tango launch in the Lenovo Phab 2 Pro (and soon the Asus ZenFone AR), Improbable’s SpatialOS demoed live, and, on the ODG R-9s at Otoy’s booth at VRLA, an incredibly realistic scene rendered entirely from some form of point cloud data.

“The ability to more accurately model reality in this manner should come as no surprise, given that reality is also voxel based. The difference being that our voxels are exceedingly small, and we call them subatomic particles.”

[1] Wikipedia: Voxel
