The hype around generative AI makes it sound like companies simply “turn on” AI and watch the magic happen. In reality, deploying AI inside large organizations is messy, technical, and deeply human.

Conversations with builders working on enterprise AI deployments paint a clearer picture of what the work actually looks like on the ground.

The Marketing vs. Product Reality Gap

AI companies are still figuring out how to talk about what they build. Marketing teams often experiment with language to position their platforms at conferences and events, while product teams sometimes cringe at the terminology being used.

Part of the challenge is that the industry itself is evolving in real time. Everyone—from engineers to executives—is still forming opinions about how AI systems should be described, positioned, and sold. The result is a constant negotiation between what sounds compelling on stage and what accurately reflects the underlying technology.

The Rise of the “Forward-Deployed” Builder

One increasingly common position in enterprise AI is the forward-deployed builder, working in either a product or an engineering capacity. These builders sit somewhere between product manager, consultant, and engineer.

Instead of building software in isolation, they embed themselves in real customer deployments. Their job is to translate a customer’s operational reality into something the platform can support.

Typical responsibilities include:

- Understanding a customer’s existing workflows and systems

- Designing how AI agents integrate into those environments

- Testing and validating that systems behave correctly in production scenarios

- Acting as the bridge between engineering teams and enterprise stakeholders

Large deployments are often staffed by a small pod of engineers and product specialists who work closely with a single customer.

Enterprise AI Is Still Mostly Strategy

One surprising reality: many large organizations want AI but don’t yet know what to do with it.

Companies frequently arrive with enthusiasm but little internal expertise. In many cases, a single internal champion pushes the initiative forward without a broader AI team behind them.

As a result, AI vendors often end up helping customers define their strategy before they even implement anything. Conversations quickly shift from “Can we deploy AI?” to questions like:

- Where in our workflows does automation actually make sense?

- What customer interactions should remain human?

- How do we measure success for an AI agent?

For many enterprises, AI adoption begins as a strategic exercise rather than a purely technical one.

The Hidden Work: Testing, QA, and Reliability

Much of the real work in AI deployments isn’t glamorous.

Ensuring that AI systems behave reliably, especially in customer-facing contexts, requires extensive testing and quality assurance. Teams spend significant time verifying that agents respond correctly across a very large space of scenarios.

For example, a customer-service AI agent might need to handle thousands of variations of a simple request like “Where is my order?” across different policies, authentication states, and edge cases. Each of those paths needs to be validated before automation can be trusted.

In practice, this means:

- Validating conversation flows

- Testing edge cases and failure modes

- Ensuring integrations work across multiple internal systems

- Implementing human-in-the-loop review processes before deployment

The goal is often to handle the “happy path” of common interactions reliably before expanding to more complex scenarios.
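To make the scenario-testing idea concrete, here is a minimal Python sketch, assuming a hypothetical agent that maps each “Where is my order?” request to a single action. The dimensions (`AUTH_STATES`, `POLICIES`, `ORDER_STATES`), the expected-behavior rules in `expected_action`, and the stand-in `toy_agent` are all invented for illustration; a real deployment would call its production agent and encode its own policies. The sketch enumerates every combination of the dimensions and records each scenario where the agent's action differs from the spec.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical dimensions of a "Where is my order?" request (illustrative only).
AUTH_STATES = ["authenticated", "guest", "expired_session"]
POLICIES = ["standard", "international", "marketplace_seller"]
ORDER_STATES = ["shipped", "delayed", "not_found"]


@dataclass(frozen=True)
class Scenario:
    auth: str
    policy: str
    order: str


def expected_action(s: Scenario) -> str:
    """Hand-written spec of the correct action per scenario (an assumed policy)."""
    if s.auth != "authenticated":
        return "verify_identity"
    if s.order == "not_found":
        return "escalate_to_human"
    if s.policy == "marketplace_seller":
        return "refer_to_seller"
    return "share_tracking"


def toy_agent(s: Scenario) -> str:
    """Hypothetical stand-in for the real agent.

    It only asks guests to verify identity, so it wrongly treats
    expired sessions as if they were authenticated.
    """
    if s.auth == "guest":
        return "verify_identity"
    if s.order == "not_found":
        return "escalate_to_human"
    if s.policy == "marketplace_seller":
        return "refer_to_seller"
    return "share_tracking"


def run_suite(agent) -> list[tuple[Scenario, str, str]]:
    """Run every combination of dimensions; return (scenario, expected, actual) per failure."""
    failures = []
    for auth, policy, order in product(AUTH_STATES, POLICIES, ORDER_STATES):
        s = Scenario(auth, policy, order)
        want, got = expected_action(s), agent(s)
        if want != got:
            failures.append((s, want, got))
    return failures


if __name__ == "__main__":
    failures = run_suite(toy_agent)
    total = len(AUTH_STATES) * len(POLICIES) * len(ORDER_STATES)
    print(f"{total - len(failures)}/{total} scenarios passed")
    for s, want, got in failures[:3]:
        print(f"FAIL {s}: expected {want}, got {got}")
```

Running the script reports 18/27 scenarios passed, because the stand-in agent mishandles every expired-session case. In a setup like this, failing scenarios could be routed to a human reviewer, and only the paths that pass consistently would be automated first.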

Platform vs. Services: A Tension in the Industry

Another emerging tension inside AI companies lies between building a scalable platform and delivering hands-on services to customers.

Enterprise AI deployments frequently require significant customization and implementation support. Investors and product leaders, however, typically prefer companies that scale through product rather than services.

Many companies are navigating a hybrid model: a product platform at the core, with a services layer that helps customers adopt it.

Over time, the hope is that repeated deployments lead to reusable templates and more standardized implementations.

The Next Wave: Personalized Software

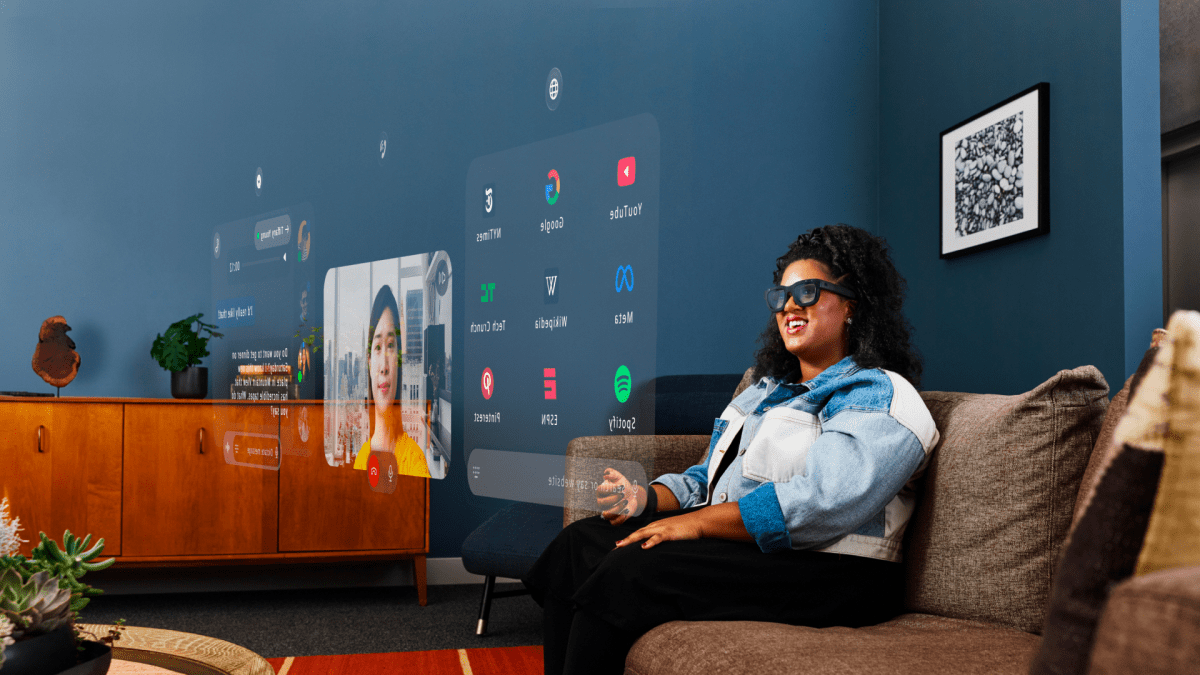

One theme that keeps emerging across the AI ecosystem is the idea that we’re entering an era of personalized software.

Instead of buying rigid tools, organizations may soon generate or customize applications tailored to their exact workflows. Early experiments already show teams rapidly building demo environments and prototypes for customers using generative development tools.

If this trend continues, the biggest shifts in the next few years may come not just from smarter models but from entirely new ways of building and delivering software.

The Takeaway

The current wave of enterprise AI isn’t just about models or algorithms. It’s about integrating intelligent systems into messy real-world environments.

That requires a new kind of builder—part product thinker, part engineer, part strategist—working directly alongside customers to turn AI from a concept into something that actually works.

The hype cycle is loud.

But the real work of enterprise AI is happening quietly — inside deployment pods, QA spreadsheets, and integration docs.