Meta

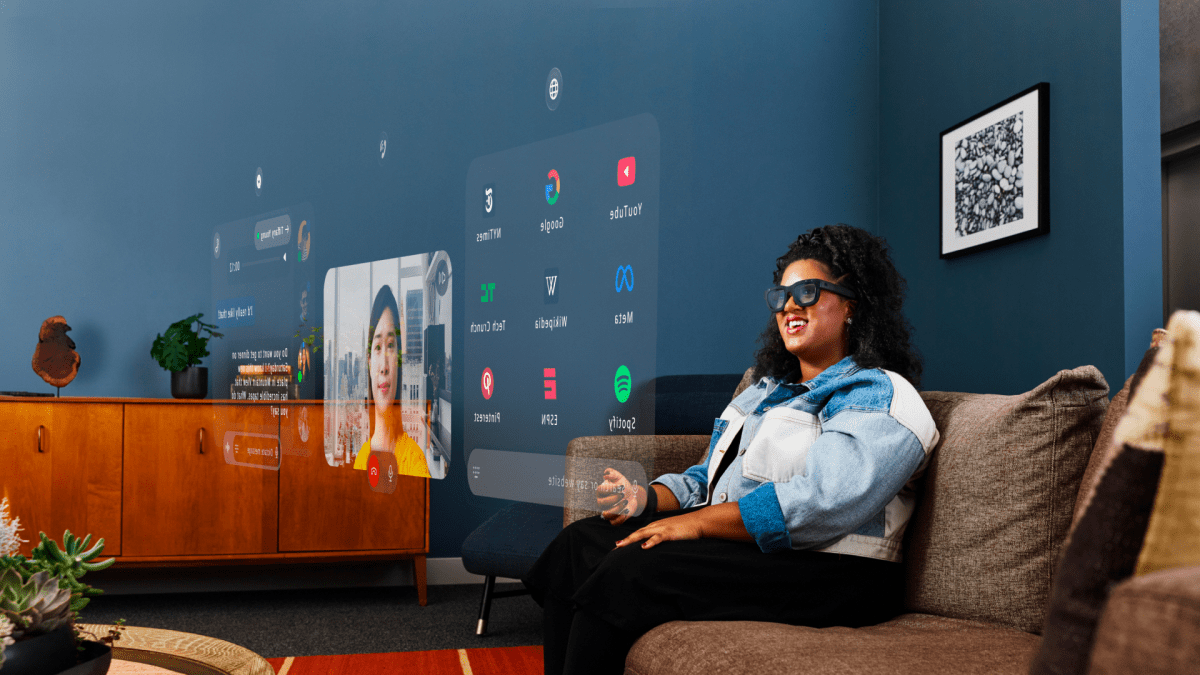

- Five years ago, Meta announced plans to build AR glasses that blend the digital and physical worlds.

- Orion, Meta's first true AR glasses, aims to enhance presence, connectivity, and empowerment.

- AR glasses offer digital experiences that are not confined to a phone screen, using large holographic displays.

- Integrated contextual AI lets the glasses anticipate and proactively address user needs.

- Orion stands out for its lightweight design, indoor/outdoor versatility, and emphasis on interpersonal interactions.

- Orion represents a significant advancement in AR glasses technology, combining wearability with advanced features.

- The groundbreaking AR display in Orion offers immersive experiences and a wide field of view.

- Orion’s unique design maintains a glasses-like appearance, allowing users to see others’ expressions.

- Orion’s capabilities include smart assistant integration, hands-free communication, and immersive social experiences.

- While not yet available to consumers, Orion serves as a polished product prototype for future AR glasses development.

OP: Introducing Orion, [Meta’s] First True Augmented Reality Glasses

Snap

Sophia Dominguez, Director of AR Platform at Snap, discussed Snap Spectacles and the company's AR initiatives at Snap Lens Fest (original post).

- Snap Spectacles can be connected to a battery pack to extend use beyond the standard 45 minutes. Snap is also focusing on B2B deployments that engage consumers directly.

- Snap is pushing to collaborate with businesses such as location-based entertainment venues and museums to expand the Snap Spectacles ecosystem.

- Sophia Dominguez has been involved in AR for over a decade, starting with Google Glass, and now oversees developers and partners creating lenses on Snapchat.

- Snap's approach to AR treats personal self-expression as the catalyst for AR lenses and is now extending to world-facing AR lenses like those on Snap Spectacles.

- Snap’s long-term vision is to make AR ubiquitous and profitable for developers, aiming to integrate digital objects seamlessly into the real world.

- Snap’s focus on consumer-level AR use cases includes self-expression as a core feature, offering a variety of options for users to engage with AR content.

- Snap’s AR platform also caters to enterprise and B2B applications, collaborating with stadiums, museums, and other businesses for unique AR experiences beyond consumer-facing lenses.

- Snap technology such as Snapchat cam is designed so venues can integrate it into large screens or jumbotrons, focusing on what consumers want (virality and joy) rather than only on enterprise solutions.

- The company aims to make AR more ubiquitous by keeping lenses fun and approachable, partnering with institutions like the Louvre to explore augmented reality in a consumer-friendly way.

- HTC Vive has delved into location-based entertainment more than Meta has, while Snap is prioritizing connected experiences: ensuring fast connectivity and optimizing for use cases such as museum activations.

- Snap collaborates closely with developers, offering grants and support with no strings attached to foster innovation in augmented reality, with the aim of being the most developer-friendly platform in the world.

- Snap’s Spectacles have evolved over the years, from simple camera glasses to AR display developer kits, with the latest fifth generation focusing on wearability, developer excitement, and paving the way for consumer adoption.

- The company has revamped Lens Studio to encompass mobile and Spectacles lenses, emphasizing ease of use and spatial experiences, aiming to create a seamless ecosystem for developers across different platforms.

- Snap values feedback and collaboration with developers, striving to provide pathways for monetization and support for creators building on both mobile and Spectacles platforms.

- Snap’s Spectacles offer a unique immersive experience, leveraging standalone capabilities and spatial interactions, aiming to enable emergent social dynamics and experiences not possible on other devices.

- Developers are considering how long users stay on the device for activities such as Zoom calls or workouts, with a focus on creating a seamless experience for users on the go.

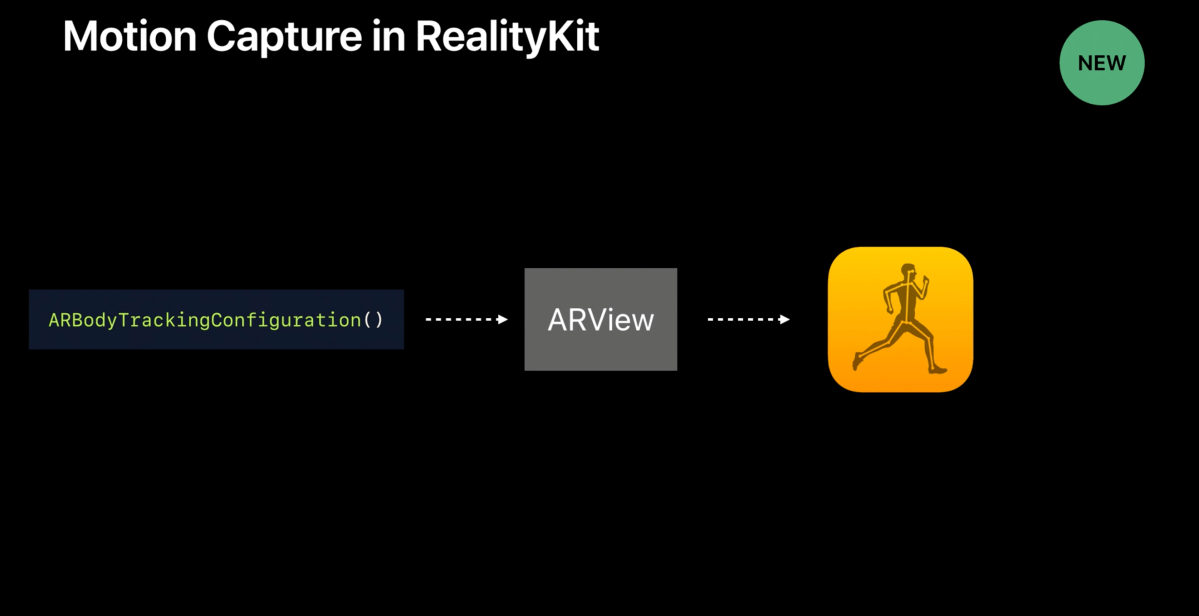

- The new SnapOS manages a dual processing architecture for Spectacles, with Lens Studio being the primary pipeline for developers to create content for the device.

- Snap is actively listening to developer feedback and working on enabling WebXR on Spectacles to support a variety of use cases and experiences.

- The operating system for Spectacles includes features like connected lenses, hands and voice-based UI, and social elements out of the box to facilitate easier development.

- The ultimate potential of spatial computing is envisioned as a way to break free from the limitations of screens, allowing for more natural interactions and connections in the real world.

- Snap aims to empower developers to explore the possibilities of augmented reality and spatial computing, emphasizing ease of use and continuous improvement based on user feedback.

Temporary Minimum Risk Route (TMRR):