Apple analyst Ming-Chi Kuo claims in his latest report that two of the 2020 iPhones will feature a rear time-of-flight (ToF) 3D depth sensor for better augmented reality features and portrait shots, via MacRumors.

“It’s not the first we’ve heard of Apple considering a ToF camera for its 2020 phones, either. Bloomberg reported a similar rumor back in January, and reports of a 3D camera system for the iPhone have existed since 2017. Other companies have beaten Apple to the punch here, with several phones on the market already featuring ToF cameras. But given the prevalence of Apple’s hardware and the impact it tends to have on the industry, it’s worth taking a look at what this camera technology is and how it works.

What is a ToF sensor, and how does it work?

Time-of-flight is a catch-all term for a type of technology that measures the time it takes for something (be it a laser, light, liquid, or gas particle) to travel a certain distance.

In the case of camera sensors, specifically, an infrared laser array is used to send out a laser pulse, which bounces off the objects in front of it and reflects back to the sensor. By calculating how long it takes that laser to travel to the object and back, you can calculate how far it is from the sensor (since the speed of light in a given medium is a constant). And by knowing how far all of the different objects in a room are, you can calculate a detailed 3D map of the room and all of the objects in it.

The technology is typically used in cameras for things like drones and self-driving cars (to prevent them from crashing into stuff), but recently, we’ve started seeing it pop up in phones as well.”
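The distance calculation described in the quoted passage can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not how any particular sensor is implemented: the function names are my own, and I'm assuming the pulse travels through air at roughly the vacuum speed of light (the two differ by about 0.03%).

```python
# Minimal sketch of the time-of-flight distance calculation.
# Assumption: light in air travels at approximately the vacuum speed of light.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to an object, given the round-trip time of a light pulse.

    The pulse travels to the object and back, so the one-way distance
    is half of the total path length.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Inverse of tof_distance_m: how long the pulse takes to go out and back."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S
```

For a sense of scale, a round trip of 10 nanoseconds works out to roughly 1.5 meters, which is why these sensors need very precise timing to resolve objects at room scale.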

The current state of ARKit 3 and an observation

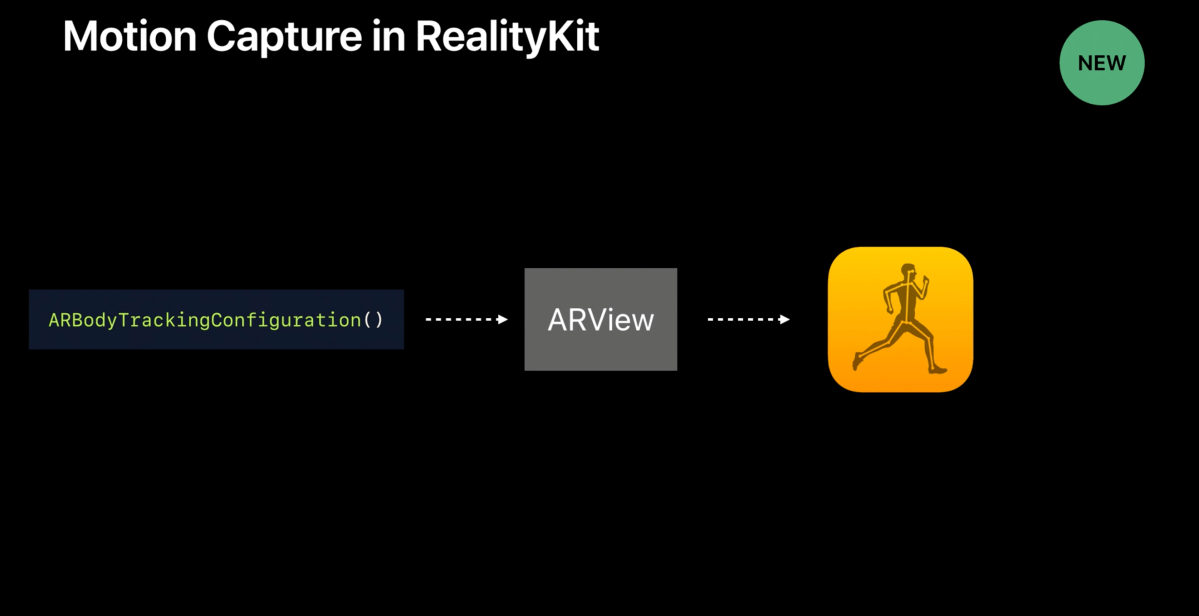

ARKit 3 has an ever-increasing scope, and of particular interest to me are those AR features which under the hood rely upon machine learning, namely Motion Capture.

Today, ARKit 3 uses raycasting together with ML-based plane detection, either when the device wakes or when an app using ARKit 3 is first opened, to work out where the floor is, for example.

Check the video below. In it, I’m standing in front of my phone, which is propped up on a table.

I’m using motion capture on an iPhone XR. The phone is sitting on a surface (the table) that it has determined to be the floor plane. As a result, you’ll notice that the avatar, once placed in the scene, has an incorrect notion of where the ground is.

The hope is that new ToF sensor technology will allow for a robust and complete understanding of the layout of the room, including its objects and the floor. In the same scenario, the device would then be able to tell that, although it is sitting on a table, the floor is not that plane but the one farther away in the real-world scene in front of it.
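To make the idea concrete, here is a rough sketch of the kind of reasoning a depth map would enable. It is purely hypothetical and not ARKit's API or algorithm: the `HorizontalPlane` type, the `pick_floor` function, and the "lowest horizontal plane wins" rule are all my own assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HorizontalPlane:
    """A hypothetical horizontal surface recovered from depth data."""
    label: str
    height_m: float  # vertical offset from the device; negative means below it


def pick_floor(planes: List[HorizontalPlane]) -> HorizontalPlane:
    """Pick the lowest detected horizontal plane as the floor.

    Assumption: the real floor is visible to the sensor and is the
    lowest horizontal surface in the scene.
    """
    if not planes:
        raise ValueError("no horizontal planes detected")
    return min(planes, key=lambda plane: plane.height_m)


# The phone rests on a table, so the table is almost level with the device,
# while the real floor is well below it.
detected = [
    HorizontalPlane(label="table", height_m=-0.02),
    HorizontalPlane(label="floor", height_m=-0.75),
]
floor = pick_floor(detected)
```

With only the table in view, a heuristic like this would still pick the table, which is the failure in the video. The point of a depth sensor is that the farther, lower plane would show up as a candidate in the first place.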

Source:

The Verge, “Apple’s future iPhone might add a time-of-flight camera — here’s what it could do”