https://daringfireball.net/2024/01/the_vision_pro

The following is a reblog from John Gruber.

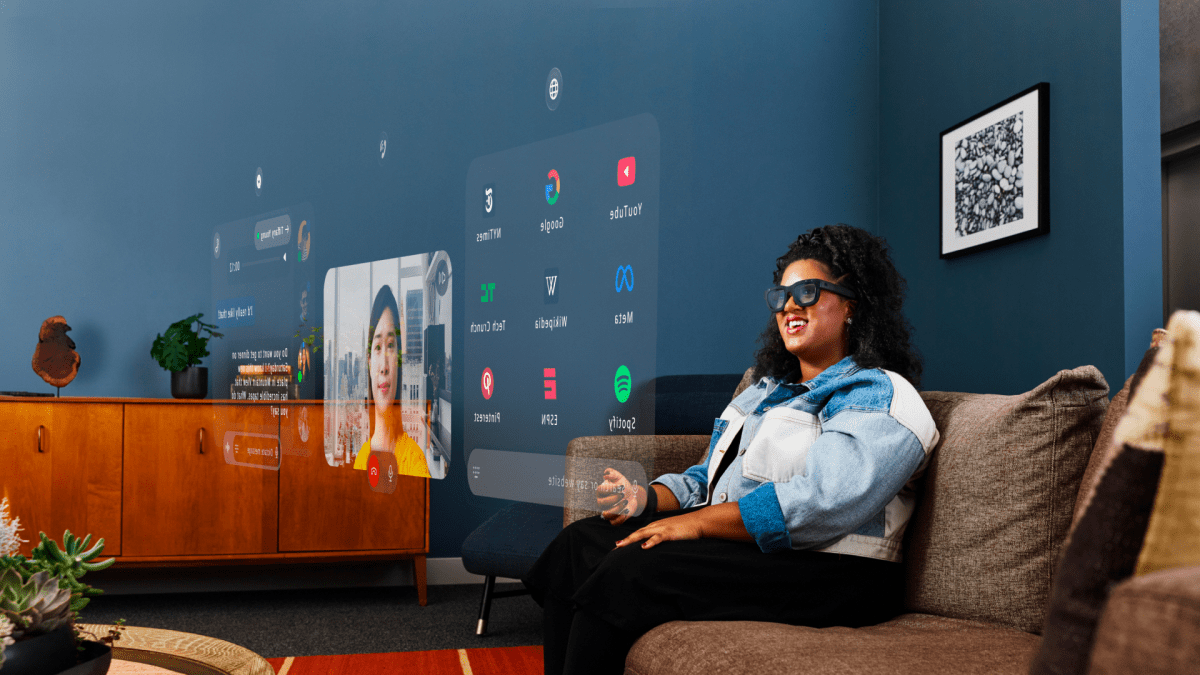

For the last six days, I’ve been simultaneously testing three entirely new products from Apple. The first is a VR/AR headset with eye-tracking controls. The second is a revolutionary spatial computing productivity platform. The third is a breakthrough personal entertainment device.

A headset, a spatial productivity platform, and a personal entertainment device.

I’m sure you’re already getting it. These are not three separate devices. They’re one: Apple Vision Pro. But if you’ll pardon the shameless homage to Steve Jobs’s famous iPhone introduction, I think these three perspectives are the best way to consider it.

THE HARDWARE

Vision Pro comes in a surprisingly big box. I was expecting a package roughly the dimensions of a HomePod box; instead, a Vision Pro retail box is quite a bit larger than two HomePod boxes stacked atop each other. (I own more HomePods than most people.)

There’s a lot inside. The top half of the package contains the Vision Pro headset itself, with the light seal, a light seal cushion, and the default Solo Knit Band already attached. The lower half contains the battery, the charger (30W), the cables, the Dual Loop Band, the Getting Started book (which is beautifully printed in full color, on excellent paper — it feels like a keepsake), the polishing cloth¹, and an extra light seal cushion.

To turn Vision Pro on, you connect the external battery pack’s power cable to the Vision Pro’s power connector, and rotate it a quarter turn to lock it into place. There are small dots on the headset’s dime-sized power socket showing how to align the cable connector’s small LED. The LED pulses when Vision Pro turns on. (I miss Apple’s glowing power indicator LEDs — this is a really delightful touch.) When Vision Pro has finished booting and is ready to use, it makes a pleasant welcoming sound.

Then you put Vision Pro on. If you’re using the Solo Knit Band, you tighten and loosen it using a dial on the band behind your right ear. VisionOS directs you to raise or lower the headset appropriately to position it at just the right height on your face relative to your eyes. If Vision Pro thinks your eyes are too close to the displays, it will suggest you switch to the “+” size light seal cushion. You get two light seal cushions, but they’re not the same: mine are labeled “W” and “W+”. The “+” is the same width, to match your light seal, but adds a wee bit more space between your eyes and the displays inside Vision Pro. For me the default (non-“+”) one fits fine.

The software then guides you through a series of screens to calibrate the eye tracking. It’s all very obvious, and kind of fun. It’s almost like a simple game: you stare at a series of dots in a circle, and pinch your index finger and thumb as you stare at each one. You go through this three times, in three different artificial lighting conditions: dark, medium, and bright. Near the end of the first-run experience, you’re prompted to bring your iPhone or iPad nearby, just like when setting up a new iPhone or iPad. This allows your Vision Pro to get your Apple ID credentials and Wi-Fi password without entering any of that manually. It’s a very smooth onboarding process. And then that’s it, you’re in and using Vision Pro.

There’s no getting around some fundamental problems with the Vision Pro hardware.

First is the fact that it uses an external battery pack connected via a power cable. The battery itself is about the width and height of an iPhone 15/15 Pro, but thicker. And the battery is heavy: about 325g, compared to 187g for an iPhone 15 Pro, and 221g for a 15 Pro Max. It’s closer in thickness and weight to two iPhone 15s than it is to one. And the tethered power cable can be an annoyance. Vision Pro has no built-in reserve battery — disconnect the power cable from the headset and it immediately shuts off. The cable clicks firmly into place, so there’s no risk of accidentally disconnecting it. But if you buy an extra Vision Pro Battery for $200, you can’t hot-swap them — you need to shut down first.

Second is the fact that Vision Pro is heavy. I’ve used it for hours at a time without any discomfort, but fatigue does set in, from the weight alone. You never forget that you’re wearing it. Related to Vision Pro’s weight is the fact that it’s quite large. It’s a big-ass pair of heavy goggles on your face. There’s nothing subtle about it — either from your first-person perspective wearing it, or from the third-person perspective of someone else looking at you while you wear it.

One of Apple’s suggestions for adjusting the position of Vision Pro on your face is to balance the weight/pressure on your face equally between your forehead and your cheeks. I found this to be good advice. My instincts, originally, were to place it slightly too low on my face, which caused the system to advise positioning it higher. It needs to be positioned just right on your face both so that you see well through its displays, and so it can see your eyes for Optic ID, Vision Pro’s equivalent of Face ID for unlocking the device and confirming actions that require authentication (like accessing passwords from your keychain, or purchasing apps). Within a day or two, it became natural for me to put it on without needing to fuss with the fit.

The default stretchy Solo Knit Band not only works well for me, but I prefer it, comfort- and convenience-wise, to the Dual Loop Band. With the Solo Knit Band, you put it on and tighten it by twisting the aforementioned dial. When you take it off, you loosen it first. You want it tight enough on your face that it’s impractical to put on or take off without tightening or loosening it each time. It’s a bit like getting accustomed to a new watch strap: at first it feels finicky, but you quickly develop muscle memory. In addition to learning how high to place Vision Pro on your face, playing around with how high the Solo Knit Band should go across the back of your head is essential for getting a consistent fit.

Why does Vision Pro come with the Dual Loop Band, which is an altogether different design? Apple’s Getting Started guide describes its purpose with a wonderful euphemism: “Apple Vision Pro also comes with a Dual Loop Band, which is a great option if you want a different fit.” Translation: You should try it if the Solo Knit Band isn’t comfortable. Vision Pro is an extraordinarily personal device. It’s not just on your face, which is incredibly sensitive to feel and touch, but it’s heavy and requires precise alignment with your eyes. You also really want the light seal to, well, seal out light. This Reddit post suggests there are 28 different sizes for the light seal. 28! (The N’s and W’s, I presume, are for narrow and wide.) Depending on the shape of your face, size of your head, and volume of hair, the Solo Knit Band might not work well. The Dual Loop Band has two Velcro straps — one across the back of your head, and one that goes across the top. People who use the Dual Loop Band will probably need to loosen and tighten both straps each time they put Vision Pro on and take it off.

If it all sounds a little fussy, that’s because it is. But there’s no way around it: it requires a precise fit both for comfort and optical alignment.

Many have noted that for a product from a company that has pushed fitness-related devices (Watch) and services (Fitness+), there is no fitness-related marketing angle for Vision Pro. It’s simply too heavy. No one wants to exert themselves with a 650g device strapped to their face. Someday Apple will make a fitness-suitable Vision headset; this Vision Pro is not it.

Another aspect that takes some getting used to is simply handling Vision Pro. You need to learn to hold it via the aluminum frame around the device itself, not the light seal. Try to hold it or pick it up by the light seal and the seal will pop off. The light seal attaches to Vision Pro magnetically, and the light seal cushion attaches to the seal magnetically. They’re easy to attach and detach, and snap into place automatically — but they will detach if you use the light seal (or the cushion) to pick up the combined unit. (Zeiss lens inserts for glasses-wearers also pop into place magnetically, and are trivial to insert and remove.)

You don’t need to put Vision Pro to sleep before taking it off, nor wake it up when putting it on. You just take it off and put it on, and the system detects whether you’re using it automatically. You can just leave the battery attached permanently.

I suspect the front face of Vision Pro is easily scratched. This is a device that demands to be handled with a degree of care. Apple’s instructions advise putting the cover on each time you’re done using it, like putting the cap back on a bottle of a fizzy beverage when you’re done drinking. I don’t think it’s delicate, per se, but it is most certainly not rugged.

My review kit included Apple’s $200 travel case. As with the Vision Pro retail box, I found it surprisingly large. It will consume much of the internal volume inside most laptop backpacks — and in fact, the travel case all by itself is roughly the size of a small child’s backpack. I very much look forward to using Vision Pro while traveling, but it’s something you’ll need to plan your packing around.²

In a short post two weeks ago — before I had this unit to review at home, but after another hands-on demo with Apple in New York — I wrote:

> Almost every first-generation product has things like this — glaring deficiencies dictated by the limits of technology. The original Mac had far too little RAM (128 KB) and far too little storage (a single 400 KB single-sided floppy disk drive). The original iPhone only supported 2G EDGE cellular networking, which was unfathomably slow and didn’t work at all while you were on a voice call. The original Apple Watch was very slow and struggled to last a full day on a single charge. The external battery pack — which only supplies 2 to 2.5 hours of battery life — is that for this first-gen Vision Pro. Also, the Vision Pro headset itself — without any built-in battery — is still too big and too heavy.

Paul Graham has a wonderful adage:

> Don’t worry what people will say. If your first version is so impressive that trolls don’t make fun of it, you waited too long to launch.

Vision Pro isn’t even in stores yet and it’s already subject to mockery. (So was the iPhone before it shipped; so was the original Macintosh.) In a few years, after a few product generations, we will all look back at this first Vision device and laugh. We’ll laugh at the external battery, we will laugh at the size and weight of the device, and eventually we will laugh at its price. The knocks against it are all undeniably true: it’s too heavy and too big for everyone, and too expensive for the mass market.

But, like that original iPhone and the original Macintosh before it, this first Vision Pro is no joke.

THE VISIONOS PLATFORM

Back in June, after getting a 30-minute demo of Vision Pro at WWDC, I wrote:

> Apple is promoting the Vision Pro announcement as the launch of “the era of spatial computing”. That term feels perfect. It’s not AR, VR, or XR. It’s spatial computing, and some aspects of spatial computing are AR or VR.
>
> To me the Macintosh has always felt more like a place than a thing. Not a place I go physically, but a place my mind goes intellectually. When I’m working or playing and in the flow, it has always felt like MacOS is where I am. I’m in the Mac. Interruptions — say, the doorbell or my phone ringing — are momentarily disorienting when I’m in the flow on the Mac, because I’m pulled out of that world and into the physical one. There’s a similar effect with iOS too, but I’ve always found it less profound. Partly that’s the nature of iOS, which doesn’t speak to me, idiomatically, like MacOS does. I think in many ways that explains why I never feel in the flow on an iPad like I can on a Mac, even with the same size display. But with the iPhone in particular, screen size is an important factor. I don’t think any hypothetical phone OS could be as immersive as I find MacOS, simply because even the largest phone display is so small. Watching a movie on a phone is a lesser experience than watching on a big TV set, and watching a movie on even a huge TV is a lesser experience than watching a movie in a nice theater. We humans are visual creatures and our field of view affects our sense of importance. Size matters.
>
> The worlds, as it were, of MacOS and iOS (or Windows, or Android, or whatever) are defined and limited by the displays on which they run. If MacOS is a place I go mentally when working, that place is manifested physically by the Mac’s display. It’s like the playing field, or the court, in sports — it has very clear, hard and fast, rectangular bounds. It is of fixed size and shape, and everything I do in that world takes place in the confines of those display boundaries.
>
> VisionOS is very much going to be a conceptual place like that for work. But there is no display. There are no boundaries. The intellectual “place” where the apps of VisionOS are presented is the real-world place in which you use the device, or the expansive virtual environment you choose. The room in which you’re sitting is the canvas. The whole room. The display on a Mac or iOS device is to me like a portal, a rectangular window into a well-defined virtual world. With VisionOS the virtual world is the actual world around you.
>
> In the same way that the introduction of multitouch with the iPhone removed a layer of conceptual abstraction — instead of touching a mouse or trackpad to move an on-screen pointer to an object on screen, you simply touch the object on screen — VisionOS removes a layer of abstraction spatially. Using a Mac, you are in a physical place, there is a display in front of you in that place, and on that display are application windows. Using VisionOS, there are just application windows in the physical place in which you are. On Monday I had Safari and Messages and Photos open, side by side, each in a window that seemed the size of a movie poster — that is to say, each app in a window that appeared larger than any actual computer display I’ve ever used. All side by side. Some of the videos in Apple’s Newsroom post introducing Vision Pro illustrate this. But seeing a picture of an actor in this environment doesn’t do justice to experiencing it firsthand, because a photo showing this environment itself has defined rectangular borders.
>
> This is not confusing or complex, but it feels profound.

That might be the longest blockquote I’ve ever included in an article. But after nearly a week using Vision Pro, I can’t put it any better now than I did then. My inkling after that first 30-minute experience was exactly right.

This first-generation Vision Pro hardware is severely restricted by the current limits of technology. Apple has pushed those limits in numerous ways, but the limits are glaring. This Vision Pro is a stake in the ground, defining the new state of the art in immersive headset technology. But that stake in the ground will recede in the rearview mirror as the years march on. Just like the Mac’s 9-inch monochrome 512 × 342 pixel display. Just like the iPhone’s EDGE cellular modem.

But the conceptual design of VisionOS lays the foundation for an entirely new direction of interaction design. Just like how the basic concepts of the original Mac interface were exactly right, and remain true to this day. Just like how the original iPhone defined the way every phone in the world now works.

There is no practical way to surround yourself with multiple external displays with a Mac or PC to give yourself a workspace canvas the size of the workspace in VisionOS. The VisionOS workspace isn’t infinite, but it feels as close to infinitely large as it could be. It’s the world around you.

And it is very spatial. Windows remain anchored in place in the world around you. Set up a few windows, then stand up and walk away, and when you come back, those windows are exactly where you left them. It is uncanny to walk right through them. (From behind, they look white.) You can do seemingly crazy things like put a VisionOS application window outside a real-world window.

Windows also retain their positions relative to each other. A single press of the digital crown button brings up the VisionOS home view. A long-press of the digital crown button recenters your view according to your current gaze. So, for example, if everything seems a little too low in your view, look up, then press-and-hold the digital crown. All open windows will re-center in your current field of view. And this works even after you stand up and move somewhere else.

You can start working in, say, your kitchen. Open up Messages, Safari, and Notes. Arrange the three windows around you from left to right. Stand up and walk to your living room. Those windows remain in your kitchen. Sit down on your sofa, and press-and-hold the digital crown. Now those windows move to your living room, re-centered in your current gaze — but exactly in the same positions and sizes relative to each other. It’s like having a multiple display setup that you can easily move to wherever you want to be.

Decades of Mac use has trained me to think that window controls are at the top of a window. In VisionOS they’re at the bottom. This took me a day or two to get accustomed to — when I think “I want to close this window”, my eyes naturally go to the top left corner. VisionOS windows really only have three controls: a close button and a “window bar” underneath, and a resizing indicator at each corner. Once you start using it, it’s easy to see why Apple put the window bar at the bottom instead of the top: if it were at the top, your hand and arm would obscure the window contents as you drag a window around to move it. With the bar at the bottom, window contents aren’t obscured at all while moving them.

Part of what makes the VisionOS workspace seem so expansive is that it’s utterly natural to make use of the Z-axis (depth). While dragging a window, it’s as easy to pull it closer, or push it farther away, as it is to move it left or right. It’s also utterly natural to rotate them, to arrange windows in a semicircle around you. It’s thrillingly science-fiction-like. The Mac, from its inception 40 years ago, has always had the concept of a Z-axis for stacking windows atop each other, but with VisionOS it’s quite different. In VisionOS, windows stay at the depth where you place them, whereas on the Mac windows pop to the front of the stack when you activate them.

Long-tap on a window’s close button and you get the option to close all other open windows, à la the Mac’s “Hide Others” command in the application menu. (Long-tapping is useful throughout VisionOS.)

The pass-through view of the real world around you means you can stand up and walk around while wearing Vision Pro. It doesn’t feel at all unsafe or disorienting. In fact it’s uncannily natural. But for using Vision Pro, it’s clearly intended that you be stationary, sitting or standing in a fixed position. Other than using Vision Pro as a camera, I can’t think of a reason to use it while walking about. Application windows are not fixed in position relative to you — they’re fixed in position relative to the world around you.

Apple’s Guided Tour video does a better job than any written description could to convey the basic gist of using the platform. The main thing is this: you look at things, and tap your index finger and thumb to “click” or grab the thing you’re looking at. It sounds so simple and obvious, but it’s a breakthrough in interaction design. The Mac gave us “point and click”. The iPhone gave us “tap and slide”. Vision Pro gives us “look and tap”. The one aspect of this interaction model that isn’t instantly intuitive is that — with a few notable exceptions — you don’t reach out and poke the things you see directly. You’ll want to at first, even after reading this. But it doesn’t work for most things, and would quickly grow tiresome even if it did. Instead you really do just keep your hands in your lap or on your tabletop, wherever they’re most comfortable in a resting position.

One of the notable exceptions is VisionOS’s virtual keyboards. (Keyboards, plural, to include both the QWERTY typing keyboard and the numeric keypad for entering your device passcode, if Optic ID for some reason fails.) VisionOS virtual keyboards work both ways — you can gaze at each key you want to press and tap your finger and thumb to activate them in turn, or you can reach out and poke at the virtual keys. Either method is fine for entering a word or two (or a 6-digit passcode); neither method is good for actually writing. Siri dictation works great for longer-form text entry, but if you want to do any writing at all without dictation, you’ll want to pair a Bluetooth keyboard. The virtual keyboard is better than trying to type on an Apple Watch, but not by much.

One uncanny aspect of using a Bluetooth keyboard is that VisionOS presents a virtual HUD over the keyboard. This HUD contains autocomplete suggestions and also shows you, in a single line, the text you’re typing. This is an affordance for people who can’t type without looking at their keyboard. On a Mac or iPad with a physical keyboard, you really only have to move your eyes to go from looking at your display to your keyboard. On VisionOS, you need to move your head, because windows tend to be farther away from you, and higher in space. This little HUD lets you see what you’re typing while keeping your eyes on the physical keyboard. It’s weird but in a good way. One week in and it still brings a smile to my face to have a physical keyboard with tappable autocomplete suggestions.

VisionOS also works great with a Magic Trackpad or Bluetooth mouse. The mouse cursor is a circle, just like the one in iPadOS. You don’t see the cursor in the space between windows; it just appears inside whichever window you’re looking at.

The Mac Virtual Display feature is both useful and almost startlingly intuitive. If you have a MacBook, you just open the MacBook lid and VisionOS will present a popover, just atop the MacBook’s open display, with two buttons: “Connect” and a close button. Tap the Connect button and you get a virtual Studio-display-size-ish Mac display. You can move and resize this window like any other in VisionOS. And while you can’t increase the number of pixels it represents, you can upscale it to be very large. It’s very usable, and while connected, your MacBook’s keyboard and trackpad work in VisionOS apps too. You can also initiate Mac Virtual Display mode manually, using Control Center in VisionOS (which you access by tapping a small chevron at the top of your view, looking up toward the ceiling or sky).

I’ve used this mode quite a bit over the last week, and the only hiccup is that I continually find myself wanting to use VisionOS’s “stare and tap” interaction with elements inside the virtual Mac display. That doesn’t work. So while my hands are on the physical keyboard and trackpad, everything works across both the Mac (inside the virtual Mac display) and VisionOS apps, but when my hands are off the physical trackpad, and I’m finger-to-thumb tapping away in VisionOS application windows, every single time I turn my attention back to a Mac app in the virtual Mac display, I find myself futilely finger-to-thumb tapping to activate or click whatever I’m looking at. This is akin to switching from an iPad to a MacBook and trying to touch the screen, but worse, because with the virtual Mac display in VisionOS, you’re continuously context switching between the Mac environment and VisionOS apps. The trackpad works perfectly in both; look-and-tap only works in VisionOS (or merely to move, resize, or activate the virtual Mac display — not to interact with the Mac UI therein).

VisionOS is already on version 1.0.1 (a software update from version 1.0.0 was already available when I set it up), and has a bunch of 1.0 bugs. I had to force quit apps — including Settings — a few times. (Press and hold both the top button and digital crown button for a few seconds, and you get a MacOS-style Force Quit dialog; keep holding both buttons down and you can force a system restart.)

There are not a lot of native VisionOS apps yet, but iPad apps really do work well. The main difference between native VisionOS apps and iPad apps isn’t that VisionOS apps work better, so much as that they simply look a lot cooler. VisionOS is, to my eyes, the best-looking OS Apple has made since the original skeuomorphic iPhone interface in iOS 1–6. Actual depth and shading — what an idea.

The apps on VisionOS’s home view are not manually organizable — a curious omission even in a 1.0 release. (Especially so given that Apple is bragging about having zillions of compatible iPad apps available.) All iPad apps — including a bunch of built-in apps from Apple itself, such as News, Books, Calendar, and Maps — are put in a “Compatible Apps” folder, and have squircle-shaped icons. Native VisionOS apps are at the root level of the apps view, and have circular icons.

The fundamental interaction model in VisionOS feels like it will be copied by all future VR/AR headsets, in the same way that all desktop computers work like the Mac, and all phones and tablets now work like the iPhone. And when that happens, some will argue that of course they all work that way, because how else could they work? But personal computers didn’t have point-and-click GUIs before the Mac, and phones didn’t have “it’s all just a big touchscreen” interfaces before the iPhone. No other headset today has a “just look at a target, and tap your finger and thumb” interface. I suspect in a few years they all will.

THE HARDWARE, AGAIN, A/V EDITION

This brings me back to the hardware of Vision Pro. The displays are excellent, but I’m already starting to see how they aren’t good enough. The eye tracking is very good, but it’s not as precise as I’d like it to be. The cameras are good, but they don’t approach the dynamic range of your actual eyesight. There is sometimes color fringing at the periphery of your vision, depending on the lighting. A light source to your side, like a window in daytime, will show the fringing. When you move your head, the illusion of true pass-through is broken — you can tell that you’re looking at displays showing the world via footage from cameras. Just walking around is enough motion to break the illusion of natural pass-through of the real world. In fact, in some ways, the immersive 3D environments — mountaintops, lakesides, the surface of the moon (!) — are more visually realistic than the actual real world, because there’s less latency and shearing as you pan your gaze.

I’ve used the original PlayStation 5 VR headset, HTC Vive Pro, and own a Meta Quest 3. Vision Pro’s display quality makes those headsets seem like they’re from a different era. Vision Pro is in a different ballpark, playing a different game. In terms of resolution, Vision Pro is astonishing. I do not see pixels, ever. I see text as crisply as I do in real life. It’s very comfortable to read. (Although very weird, still, one week in, to have, say, a Safari window that appears 6 or 7 feet tall). But I can already imagine a better Vision headset display. I can already imagine lower latency between the camera footage and the displays in front of my eyes. I can already imagine greater dynamic range, like when looking out a window during daytime from inside a dim room.

Vision Pro’s displays are amazing, yet also obviously not good enough.

The speakers, on the other hand, are simply amazing. I’ve never experienced anything quite like them. I expected that they’d sound fine, but not as good as AirPods Pro or Max. Instead, I find they sound far better than any headphones I’ve ever worn. That’s because they’re actually speakers, optimally positioned in front of your ears. There’s always a catch, and the catch with Vision Pro’s speakers is that they’re not private at all. Someone sitting next to you can hear what you hear; someone near you can hear most of what you hear. When using Vision Pro in public or near others, you’ll want to wear AirPods both for privacy and courtesy — not audio quality.

The speakers also convey an uncanny sense of spatial reality.

Last, I’ve consistently gotten 3 full hours of battery life using Vision Pro on a full charge. Sometimes a little more. In my experience, Apple’s stated 2–2.5 hour battery life is a floor, not a ceiling. I also have suffered neither physical discomfort nor nausea in long sessions.³

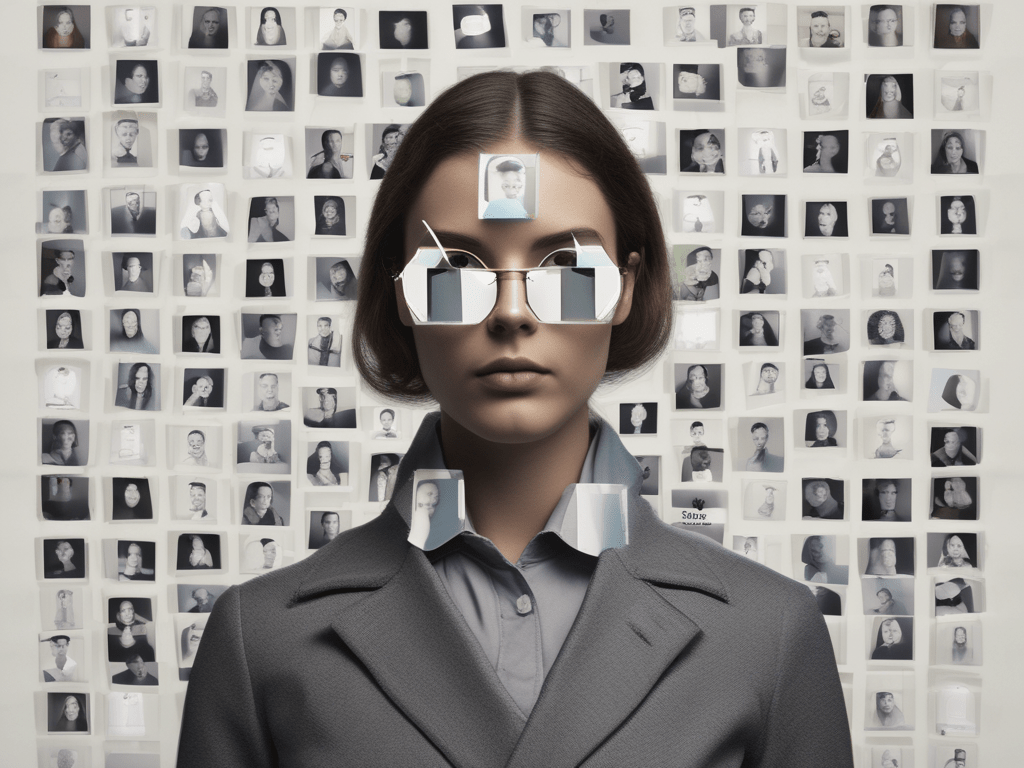

I’M SORELY TEMPTED, BUT SHALL RESIST, MAKING A ‘PERSONA NON GRATA’ PUN IN THIS SECTION HEADING

Personas are a highlight feature of Vision Pro. Your persona is a digital avatar of your head and shoulders that appears in your stead when making FaceTime calls, or using other video call software that adopts the APIs to use them. At launch that already includes Zoom, Webex, and Microsoft Teams.

Apple is prominently labeling the entire persona feature “beta”, and it doesn’t take more than a moment of seeing one to know why. Personas are weird. They are very deep in the uncanny valley. There is no mistaking a persona for the actual person. At times they seem far more like a character from a video game than a photorealistic visage. And at all times they seem somewhat like a video game character. My hair, for example, looks like a shiny plastic Lego hairpiece.

Even capturing your persona is awkward. You have to take Vision Pro off your head and turn it around so the cameras (and lidar sensor?) can see you, but you don’t get a good image of what the cameras are capturing, because the front-facing EyeSight display is relatively low resolution. Your hair might be messed up from the headband, and you’ll need to check yourself in an actual mirror to make sure your shirt is smooth.

I FaceTimed my wife after capturing mine, and her reaction — not really knowing at all what to expect — was “No, no, no — oh my god what is this?” And then she just started laughing. We concluded the test with her telling me, “Don’t ever call me like that again.” She was joking (I think), but personas are so deep in the uncanny valley that the first time anyone sees one, they’re going to want to talk about it.

Apple is in a tough spot with this feature. The feature clearly deserves the prominent “beta” label in its current state. But you can also see why Apple needed to include the feature at launch, no matter how far from “good” it is. You can’t ship a productivity computer today that can’t be used for video conferencing. It’s like email or web browsing: essential. But that leaves only two options: a cartoon-like Memoji avatar, or an attempt at photorealism. Apple almost certainly could have knocked a Memoji avatar out of the park for this purpose, but I think rightly decided that that would be utterly inappropriate in most professional work contexts. You could FaceTime your family and friends as a Memoji and they’d accept it without judgment, but not professional colleagues or clients.

In defense of personas as they exist right now, I’ve found that I do get used to them a few minutes into a call. (I’ve had a 30-minute FaceTime call with Vision-Pro-wearing reps from Apple, as well as calls with fellow reviewers Joanna Stern and Nilay Patel.) But I think they work best persona-to-persona — that is to say, between two (or more) people who are all using a Vision Pro. That’s obviously not going to be the case for most calls. This will get normalized, as more people buy and use Vision headsets, and as Apple races to improve the feature to non-beta quality. But for now, if you use it, expect to talk about it.

Your persona is also used for the presentation of your eyes in the EyeSight feature. EyeSight, in Apple’s product marketing, is a headline feature of Vision Pro. It’s prominently featured on their website and their advertisements. But in practice it’s very subtle. Here’s the best selfie I’ve been able to capture of myself showing EyeSight:

See original post for image.

For the record, my eyes were open when I snapped that photo.

EyeSight is not displayed most of the time that you’re using Vision Pro — it only turns on when Vision Pro detects a person in front of you (including when you look at yourself in a mirror). Most of the time you’re using Vision Pro, the front display shows nothing at all.

EyeSight is not an “Oh my god, I can see your eyes!” feature, but instead more of an “Oh, yes, now that you ask, I guess I can sort of see your eyes” feature. Apple seemingly went to great lengths (and significant expense) to create the EyeSight feature, but so far I’ve found it to be of highly dubious utility, if only because it’s so subtle. It’s like wearing tinted goggles meant to obscure you, not clear goggles meant to show your eyes clearly.

PERSONAL ENTERTAINMENT

I’ve saved the best for last. Vision Pro is simply a phenomenal way to watch movies, and 3D immersive experiences are astonishing. There are 3D immersive experiences in Vision Pro that are more compelling than Disney World attractions that people wait in line for hours to see.

First up are movies using apps that haven’t been updated for Vision Pro natively. I’ve used the iPad apps for services like Paramount+ and Peacock. Watching video in apps like these is a great experience, but not jaw-dropping. You just get a window with the video content that you can make as big as you want, but “big”, for these merely “compatible” apps, is about the size of the biggest wall in your room. This is true too for video in Safari when you go “full screen”. It breaks out of the browser window into a standalone video window. (Netflix.com is OK in VisionOS Safari, but YouTube.com stinks — it’s a minefield of UI targets that are too small for eye-tracking’s precision.)

Where things go to the next level are the Disney+ and Apple TV apps, which have been designed specifically for Vision Pro. Both apps offer immersive 360° viewing environments. Disney+ has four: “the Disney+ Theater, inspired by the historic El Capitan Theatre in Hollywood; the Scare Floor from Pixar’s Monsters Inc.; Marvel’s Avengers Tower overlooking downtown Manhattan; and the cockpit of Luke Skywalker’s landspeeder, facing a binary sunset on the planet Tatooine from the Star Wars galaxy.” With the TV app, Apple offers a distraction-free virtual theater.

What’s amazing about watching movies in these two apps is that the virtual movie screens look immense, as though you’re really in a movie theater, all by yourself, looking at a 100-foot screen. Apple’s presentation in the TV app is particularly good, giving you options to simulate perspectives from the front, middle, or back of the theater, as well as from either the floor or balcony levels. (Like Siskel and Ebert, I think I prefer the balcony.) The “Holy shit, this screen looks absolutely immense” effect is particularly good in Apple’s TV app. Somehow these postage-stamp-size displays inside Vision Pro are capable of convincing your brain that you’re sitting in the best seat in the house in front of a huge movie theater screen. (As immersive as the Disney+ viewing experience is, after using the TV app, I find myself wishing I could get closer to the big screen in Disney+, or make the screen even bigger.) I have never been a fan of 3D movies in actual movie theaters, but in Vision Pro the depth feels natural, not distracting, and you don’t suffer any loss of brightness from wearing what are effectively sunglasses.

And then there are the 3D immersive experiences. Apple has commissioned a handful of original titles that are available, free of charge as Vision Pro exclusives, at launch. I’m sure there are more on the way. There aren’t enough titles yet to recommend them as a reason to buy a Vision Pro, but what this handful of titles promises for the future is incredible. (I look forward, too, to watching sports in 3D immersion — but at the moment that’s entirely in the future. But hopefully the near future.)

But I can recommend buying Vision Pro solely for use as a personal theater. I paid $5,000 for my 77-inch LG OLED TV a few years ago. Vision Pro offers a far more compelling experience (including far more compelling spatial surround sound). You’d look at my TV set and almost certainly agree that it’s a nice big TV. But watching movies in the Disney+ and TV apps will make you go “Wow!” These are experiences I never imagined I’d be able to have in my own home (or, say, while flying across the country in an airplane).

The only hitch is that Vision Pro is utterly personal. Putting a headset on is by nature isolating — like headphones but more so, because eye contact is so essential for all primates. If you don’t often watch movies or shows or sports by yourself, it doesn’t make sense to buy a device that only you can see. Just this weekend, I watched most of the first half of the Chiefs-Ravens game in the Paramount+ app on Vision Pro, in a window scaled to the size of my entire living room wall. It was captivating. The image quality was a bit grainy scaled to that size (I believe the telecast was only in 1080p resolution), but it was better than watching on my TV simply because it was so damn big. But I wanted to watch the rest of the game (and the subsequent Lions-49ers game) with my wife, together on the sofa. I was happier overall sharing the experience with her, but damn if my 77-inch TV didn’t suddenly seem way too small.

Spatial computing in VisionOS is the real deal. It’s a legit productivity computing platform right now, and it’s only going to get better. It sounds like hype, but I truly believe this is a landmark breakthrough like the 1984 Macintosh and the 2007 iPhone.

But if you were to try just one thing using Vision Pro — just one thing — it has to be watching a movie in the TV app, in theater mode. Try that, and no matter how skeptical you were beforehand about the Vision Pro’s price tag, your hand will start inching toward your wallet.

- Which is a completely different (finer and softer) polishing cloth than the $19 Apple Polishing Cloth that comes with the Studio Display and Pro Display XDR. The polishing cloth for those displays is quite suede-like. The new Vision Pro polishing cloth is lightweight and fine, like the fabric from a silk blouse.

- The travel case is also covered in white fabric that I suspect will easily stain and soil. It does not seem like a material that is meant to be put on the floor under an airplane seat, despite the fact that it is a product that is obviously going to be slid back and forth on the floor under airplane seats. I haven’t left the house with it, so perhaps this fabric is surprisingly stain-resistant, but my gut feeling is that this is one of those Apple products designed for an ideal, perfectly clean, uncluttered world that doesn’t exist outside of Apple marketing photography.

- The Zeiss lens inserts for Vision Pro come with a QR-like code on a card. When you’re setting up Vision Pro, if you use lens inserts, there’s a point where you scan that code by looking at it. This pairing lets Vision Pro know exactly what your lens prescription is, and it adjusts its eye-tracking accordingly. The review unit package Apple sent to me included the correct lens inserts, but the incorrect code card for those lenses. This mistake was specific to my review unit, and, Apple told me, will not be a problem for customers. Apple emailed me to inform me of the mistake, and included the correct code to scan. But I spent the first two days of testing with lens inserts that weren’t correctly calibrated. Everything looked fine visually (because the lenses were correct for my prescription) but eye-tracking was off. Not unusable, but certainly less accurate than what I’d experienced in my numerous hands-on demos in the preceding months. And, the day before Apple notified me of the mistake and sent me the correct code for my lenses, after a few hours using Vision Pro, I developed a nasty headache and, eventually, a wee bit of nausea. After recalibrating with the correct code for my lenses, I had even longer sessions of continuous use, with no headache or discomfort, and absolutely no nausea. I include this anecdote here only as evidence that calibrating Vision Pro for your specific vision is seemingly essential.